Multimodal AI Courses

6 courses · 570K learners · 4 providers

Explore AI systems that process and generate multiple data types simultaneously, including text-image models, vision-language models, and unified architectures like GPT-4V and Gemini.

All · Vision-Language Models · Text-to-Image · Image-to-Text · Audio-Visual Learning · Cross-Modal Retrieval

Editor's Picks

Top Rated in Multimodal AI

Natural Language Processing with Deep Learning

Stanford Online

10 weeks · advanced

Free

Finetuning Large Language Models

DeepLearning.AI

1 hour · intermediate

Free

fast.ai

Free

intermediate

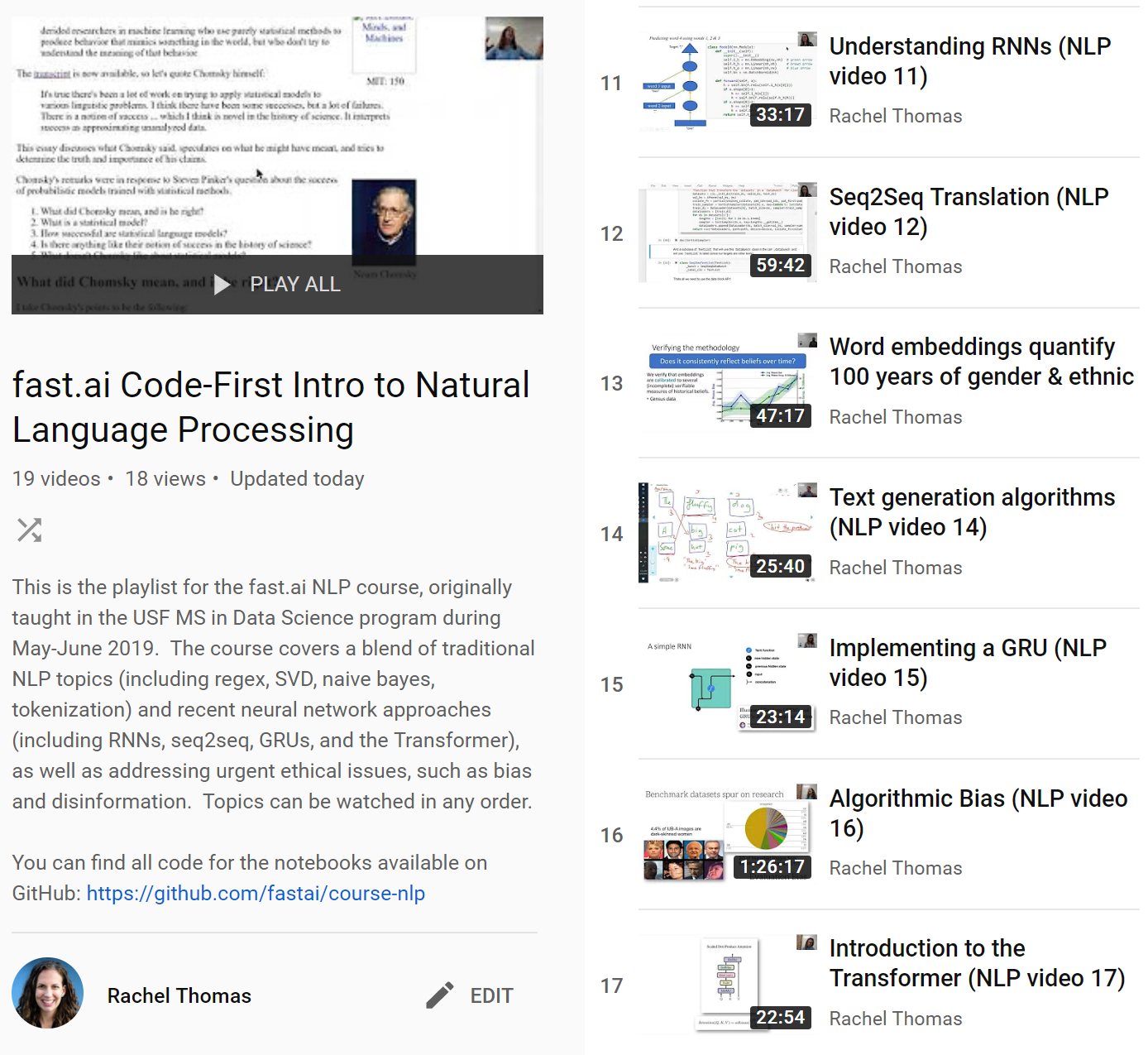

A Code-First Introduction to NLP

fast.ai

6 weeks · intermediate

Free

All Multimodal AI Courses

Natural Language Processing with Deep Learning

Stanford Online

10 weeks · advanced

Free

Finetuning Large Language Models

DeepLearning.AI

1 hour · intermediate

Free

Building Multimodal Search and RAG

DeepLearning.AI

1 hour · intermediate

Free

A Code-First Introduction to NLP

fast.ai

6 weeks · intermediate

Free

Large Language Models with Semantic Search

DeepLearning.AI

1 hour · intermediate

Free

Introduction to Gemini API

Google Cloud

3 hours · beginner

Free

Browse Multimodal AI Courses by Provider

See multimodal AI courses from a specific platform.

Frequently Asked Questions

What is multimodal AI?

Multimodal AI systems process and understand multiple types of data — text, images, audio, and video — simultaneously. Models like GPT-4V and Gemini can reason across modalities for richer understanding.

How is multimodal AI different from unimodal AI?

Unimodal AI processes one data type (e.g., text-only NLP). Multimodal AI combines multiple inputs — for example, analyzing an image and answering questions about it using both visual and textual understanding.

What are the applications of multimodal AI?

Applications include visual question answering, image captioning, video understanding, document analysis, medical imaging with clinical notes, and creative tools that combine text and image generation.

What models should I learn for multimodal AI?

Start with CLIP for vision-language understanding, then explore GPT-4V, Gemini, and LLaVA. For generation, study Stable Diffusion and DALL-E for text-to-image capabilities.
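
To make the CLIP suggestion concrete, here is a minimal sketch of how a CLIP-style model matches an image to candidate captions: both are mapped into a shared embedding space, and captions are ranked by cosine similarity to the image vector. The three-dimensional vectors, the `rank_captions` helper, and the temperature value are all hypothetical stand-ins chosen for illustration; a real model would produce much larger embeddings with learned image and text encoders.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def rank_captions(image_vec, caption_vecs, temperature=0.07):
    """Rank captions by similarity to the image embedding.

    `temperature` is an illustrative value (an assumption); it only
    controls how sharply the probabilities favor the best match.
    """
    captions = list(caption_vecs)
    logits = [cosine(image_vec, caption_vecs[c]) / temperature for c in captions]
    probs = softmax(logits)
    return sorted(zip(captions, probs), key=lambda pair: pair[1], reverse=True)


# Hypothetical embeddings: made up for this sketch, not produced by a real encoder.
image_vec = [0.9, 0.1, 0.2]
caption_vecs = {
    "a photo of a dog": [0.8, 0.2, 0.1],
    "a photo of a cat": [0.1, 0.9, 0.2],
    "a diagram of a circuit": [0.1, 0.2, 0.9],
}

ranking = rank_captions(image_vec, caption_vecs)
for caption, prob in ranking:
    print(f"{prob:.3f}  {caption}")
```

The same ranking step underlies zero-shot classification and cross-modal retrieval: swap the captions for class prompts or a document index, and the encoders do the rest.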